Vox clamantis in deserto

David Boutt: Of droughts and downpours in New England and beyond

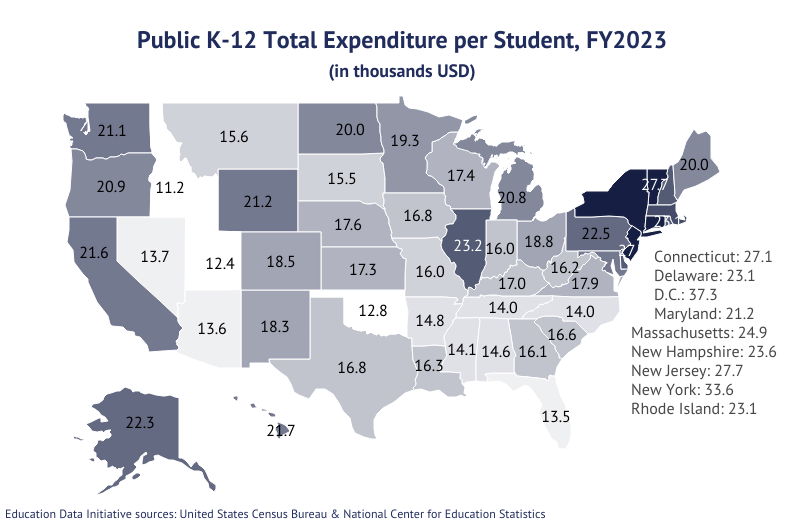

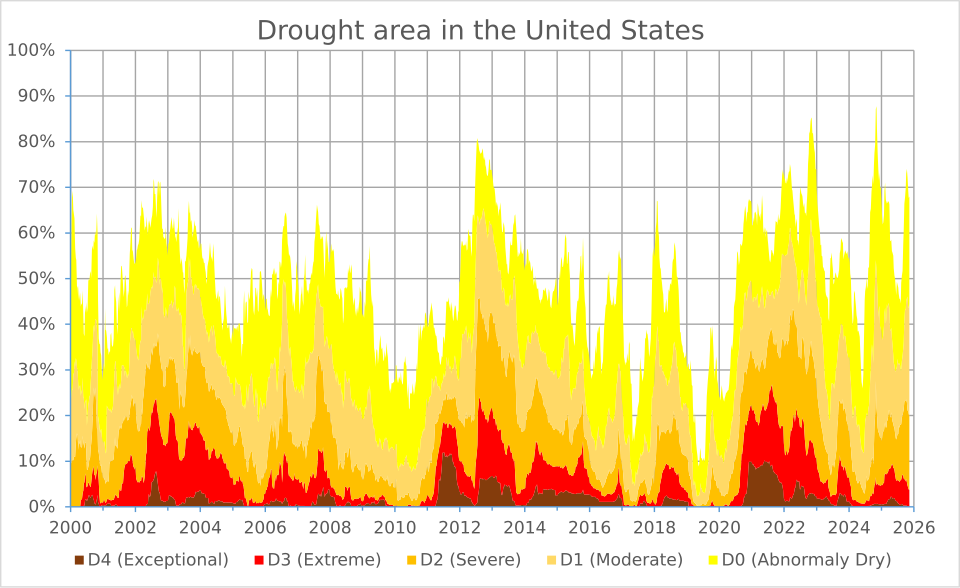

Percentage area in U.S. Drought Monitor categories since 2000.

From The Conversation, except for image above.

David Boutt is a professor of hydrogeology at the University of Massachusetts at Amherst. He receives funding from the U.S. Geological Survey.

About two-thirds of the U.S. is in some stage of drought in late spring 2026, yet at the same time the country has been seeing more intense downpours. It might seem contradictory, but both are symptoms of rising global temperatures.

The reason has to do with the water cycle.

Water influences every aspect of our lives through a delicate cycle that transforms liquid water into vapor and back again.

As the Earth warms, more precipitation is arriving in intense storms that deliver more water than the landscape can handle. When storms drop a few inches of rain over a few days, the water sinks into the soil, nourishing plants and replenishing groundwater. But during heavy downpours, the rain can’t sink in fast enough, and much of the water runs off instead, often fueling flooding.

Water also evaporates faster in warmer temperatures. So, despite an increase in total annual precipitation nationally, the landscape is drying out more rapidly as temperatures rise, resulting in more severe and frequent droughts.

My colleagues and I are documenting these broad shifts and what they mean for the future of the terrestrial hydrological cycle – the water cycle on land – and the people and ecosystems that depend on it. The effects are occurring across climates around the world.

Hydrological cycle out of sync

Fundamentally, the terrestrial hydrological cycle is controlled by two things: precipitation, which adds moisture to the ground, and evapotranspiration, meaning water that returns to the atmosphere either by evaporating from the land or by being released from plants through their leaves.

Over the long term, the total amount of precipitation that falls, minus the total evapotranspiration sending moisture back into the atmosphere, determines how much water moves through the hydrologic system. That affects stream flow, soil moisture and the amount of water sinking into the ground and recharging aquifers.

During heavy precipitation in the U.S. Northeast, water is rapidly routed through the shallow subsurface rather than reaching deeper soil and groundwater storage. Julianna C. Huba et al., 2026

When this balance shifts or becomes out of sync with its natural state, it affects how water moves through the landscape. And that directly influences where water is available and how much is there.

These shifts in precipitation are occurring alongside longer growing seasons that allow the land to accumulate more heat. As temperatures rise, drier air also pulls more water from the landscape, increasing the risk of drought.

The changing timing of precipitation can result in counterintuitive feedbacks, as recent studies in the Northeast have shown.

In one study, scientists at Harvard Forest found that more intense storms are delivering greater amounts of water at rates exceeding the soil’s capacity to retain it. For example, in 2023 they found that high-intensity events in their research area made up about 42% of the year’s total precipitation.

When more precipitation is concentrated, with long gaps between storms, the surface soils have time to drain and dry out. This has contributed to drier atmospheric conditions as less water is available to evaporate from the land.

This effect from bursts of heavy rain with dry periods in between shows up in the data. My research group at UMass found in a separate study that while wet years in the Northeast are becoming more frequent, dry years are also becoming more frequent.

Data collected by scientists with Harvard Forest, near Petersham, Mass., from 1964 to 2023 shows how precipitation has been increasing, with a large percentage of it coming from downpours. Samuel Jurado and Jackie Matthes, 2025, CC BY-NC-SA

During the wettest years over the past decade, we found an accumulation of approximately 2 inches of water in the shallow ground, contributing to higher water tables, more frequent flooding and damage to infrastructure during heavy rainstorms.

Conversely, during dry periods the landscape dries out rapidly, resulting in drought advisories, fires, water restrictions and crop failures in what is normally one of the wetter regions of the U.S.

Finding solutions

Many states are now incorporating climate science into decisions about infrastructure and land use to better understand the risks ahead. Massachusetts, for example, created a climate data clearinghouse to make research and data widely available. It also invested in computer models to examine potential future scenarios of water storage on the landscape so communities and farmers can prepare.

Communities can boost their resilience to extreme storms with urban designs and construction that take flood risk into account, provide careful drainage as more areas are paved, and add features such as rain gardens, riverside parks and bioswales that move and hold more water where needed.

To manage dry years, communities can implement conservation measures, such as limiting outdoor watering, subsidizing low-flow toilets and showers, and using water pricing to encourage more careful use. They can also teach residents how to use less water and generally be more mindful of water use.

On a larger scale, a new study using computer models indicates that more aggressive efforts to reduce the drivers of climate change – particularly reducing greenhouse gas emissions from burning fossil fuels – can reverse the trend of extreme precipitation, with rates eventually returning to those seen in the 20th century.

Until that happens, however, the world will have to adapt to a changing hydrological cycle.

Stephen Chen: How an ancient Chinese philosopher speaks to Americans’ college-rankings obsession

The flags of the eight Ivy League universities flying over Wien Stadium, at Columbia University, in New York City.

— Photo by Kenneth C. Zirkel


From The Conversation (not including image above).

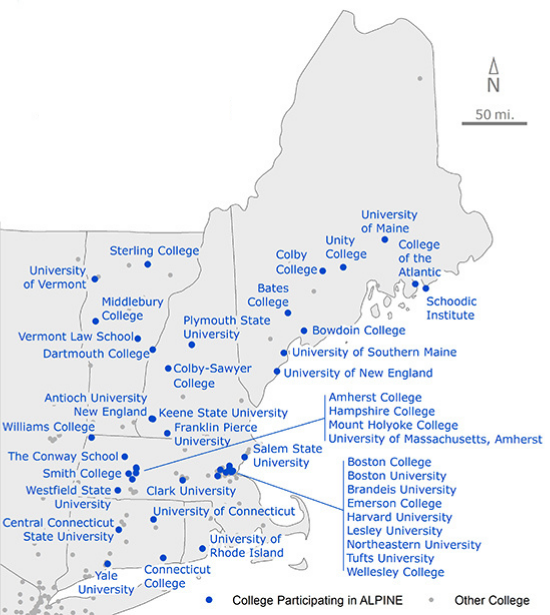

Stephen Chen is an associate professor of psychology at Wellesley College.

Preparation of this essay was supported in part by a grant from the Asian Pacific American Religions Research Initiative.

(Editor’s note: Ivy League universities are about to announce the number of high-school students who applied to them for admission to the Class of 2030.)

WELLESLEY, Mass.

Each March, many of the country’s most selective colleges and universities release their admissions decisions, reviving debates over the roles of race, wealth and privilege – and putting Americans’ cultural obsession with rankings back in the spotlight.

Meanwhile, a more personal set of questions will emerge in many homes and schools. Who got into a “better” school, and why? And for those who didn’t, what to do with a dream school deferred? What’s missing are more fundamental questions about the costs of striving for status and how to know when to stop.

From my former life as a college counselor to my current one as a psychology professor, I’ve spent more than two decades working with Asian-American families, the demographic group that often finds itself at the center of college admissions debates. I listen as they grapple with questions of race, social status and who makes it in the U.S. and why. I’ve also seen firsthand, both inside and outside of the research lab, how some students’ never-ending quest for achievement takes a toll on their mental health.

Americans’ frenzy over college admissions may be a relatively modern affliction, but striving for status is timeless and universal, and it can benefit from the wisdom of ancient texts. This is why, in my team’s research with Asian-American families, we bring the Chinese philosopher Laozi into the conversation. Through the Daodejing, one of the central texts of Daoism, Laozi offers perspectives from a tumultuous period of status-striving in Chinese history – and shifts our focus from comparison and competition to contentment.

The ‘success frame’

In interviews with Asian American parents, children and teens over the past 10 years, I hear echoes of what sociologists Jennifer Lee and Min Zhou call the “Asian American success frame”: success defined by elite educational credentials, graduate degrees and select occupations. Their research shows how the success frame is endorsed by Asian Americans across different ethnic groups, generations and socioeconomic brackets.

My team’s ongoing interviews, in turn, provide a window into how that idea of success is promoted. One mother told her 11-year-old son that she wished for him to pursue not an M.D. or a Ph.D., but both. Another parent discouraged a 16-year-old daughter with college applications on the horizon from applying to state schools, having heard that some job recruiters consider only Ivy League resumes.

Future graduates wait for the procession to begin for the 2010 commencement ceremony at Yale University in New Haven, Conn. AP Photo/Jessica Hill

These conversations rarely mention the toll of chasing these highly specific, highly ambitious benchmarks of success. Rather, it comes to light when we talk with parents one-on-one about their own experiences. One lamented being a doctor, but not the “right kind” of doctor; another mentioned getting a Ph.D., but not from the best school; yet another described landing the job they sought when they immigrated to the U.S., only to run up against “bamboo ceilings” in their career.

Each of these comparisons involves relative or subjective social status: not how much education, wealth or prestige people actually have, but how much they think they have, relative to others. Decades of research indicate that thinking you have lower relative status takes a unique toll on mental and physical health.

I see this in my lab’s studies, as well: Parents who perceive themselves as being lower in subjective social status report more depressive symptoms, and children who perceive themselves as having low relative status report more loneliness, even when accounting for families’ actual levels of income and education.

Likewise, scholars Zhou and Lee identify similar struggles among Asian-Americans shouldering the weight of these social comparisons. A woman who attended a lower-ranked college than her family members told researchers she “feels like the ‘black sheep’ of the family”; a man rejected from elite Ph.D. programs considers himself a failure for “only having a B.A.”

The unending climb of status comparisons can be a crushing load – and this is where Laozi comes into the conversation.

Dangers of desire

By some accounts, Laozi was a contemporary of Confucius in the sixth century B.C.E. – though the details of his biography are more legendary than factual.

Traditionally, he has been venerated as the author of the Daodejing, a foundational text of Daoism: a Chinese philosophical and religious tradition centered around following the “dao,” or “the way” of nature. The general consensus of modern scholarship, however, is that the Daodejing reflects the work of generations of thinkers and editors, and that even the name “Laozi” embodies ideas developed over centuries.

‘Laozi Riding an Ox,’ by Zhang Lu (15th-16th century). National Palace Museum via Wikimedia Commons

Most scholars date the composition of the Daodejing to China’s Warring States period, from 475 to 221 B.C.E. It was a time of tremendous technological, economic and political change, when competitions for status played out on the battlefield. Given this historical context, it’s little surprise that many of the text’s musings are devoted to status-chasing and the dark side of human desire.

For example, the Daodejing criticizes the ruling class and its talent-recruitment system for dangling enticing status markers that could never be fully achieved. Dreaming of prestige could feel like a full assault on the senses, as captured in Ken Liu’s luminous translation:

“A profusion of colors blinds the eye.

A cacophony of noises deafens the ear.

A flood of flavors numbs the tongue.

Rushing and chasing, the mind becomes unsettled.

Craving and desiring, the heart loses itself on crooked paths.”

The Daodejing may be an ancient text, but part of its enduring appeal is its timelessness. Through Liu’s prose, we can easily imagine Laozi critiquing today’s profusion of college influencer videos, a cacophony of Reddit threads trumpeting admissions strategies, and high school students rushing and chasing after a stacked resume.

Laozi sees plainly the Sisyphean nature of achieving: that it inevitably leads to desiring more. He offers a stark warning: “The more you desire, the more it costs. / The more you hoard, the more you’ll waste.”

Critically, as the philosopher Curie Virág argues, Laozi isn’t suggesting that people abandon desire altogether. Rather, our truest desires can only be uncovered when we’ve freed ourselves from those imposed by society. And it’s the satisfaction of these true desires that can lead to contentment.

Deeper questions

In my research team’s ongoing study with Chinese-American parents and adolescents, we present a phrase encapsulating one of the core teachings of the Daodejing: that contentment – knowing or mastering satisfaction – leads to happiness. We then ask parents to explain to their child what they think it means and whether or not they agree.

Most parents are familiar with the phrase. Some endorse it, while others add caveats. Being content is different from being lazy, some emphasize; it’s not an excuse to stop striving. Many struggle to articulate the distinctions between contentment, laziness and healthy ambition – and as a psychologist, I admit that I’m right there with them.

I want Laozi to provide a clear definition for contentment, and even better, a formula for how to find it. But the Daodejing is more descriptive than prescriptive – less how-to and more what is. In Liu’s description, the text is Laozi’s invitation into a conversation, and it allows our deepest questions to come to the surface. Beneath the race for rank and status, what is it that we actually desire, and how do we find it?

These are difficult questions for any parent to answer. But if we’re willing to start the conversation, we can begin by asking them first of ourselves.

Carolina Rossini: Why a lawsuit involving Instagram is so important

From The Conversation (not including image above).

Carolina Rossini is a professor of practice and director of the Public Interest Technology Initiative at the University of Massachusetts at Amherst.

She was a staffer at organizations including the Electronic Frontier Foundation, Public Knowledge, and the Harvard Berkman Klein Center, which were funded by various foundations and companies. See https://www.carolinarossini.net/bio

A Los Angeles courtroom is hosting what may become the most consequential legal challenge Big Tech has ever faced.

This is an inflection point in the global debate over Big Tech liability: For the first time, an American jury is being asked to decide whether platform design itself can give rise to product liability – not because of what users post on them, but because of how they were built.

As a technology policy and law scholar, I believe that the decision, whatever the outcome, will likely generate a powerful domino effect in the United States and across jurisdictions worldwide.

The case

The plaintiff is a 20-year-old California woman identified by her initials, K.G.M. She said she began using YouTube around age 6 and created an Instagram account at age 9. Her lawsuit and testimony allege that the platforms’ design features, which include likes, algorithmic recommendation engines, infinite scroll, autoplay and deliberately unpredictable rewards, got her addicted. The suit alleges that her addiction fueled depression, anxiety, body dysmorphia – when someone sees themselves as ugly or disfigured when they aren’t – and suicidal thoughts.

TikTok and Snapchat settled with K.G.M. before trial for undisclosed sums, leaving Meta and Google as the remaining defendants. Meta CEO Mark Zuckerberg testified before the jury on Feb. 18, 2026.

Meta CEO Mark Zuckerberg testified in court in a lawsuit alleging that Instagram is addictive by design.

The stakes extend far beyond one plaintiff. K.G.M.’s case is a bellwether trial, meaning the court chose it as a representative test case to help determine verdicts across all connected cases. Those cases involve approximately 1,600 plaintiffs, including more than 350 families and over 250 school districts. Their claims have been consolidated in a California Judicial Council Coordination Proceeding, No. 5255.

The California proceeding shares legal teams and an evidence pool, including internal Meta documents, with a federal multidistrict litigation that is scheduled to advance in court later this year, bringing together thousands of federal lawsuits.

Legal innovation: Design as defect

For decades, Section 230 of the Communications Decency Act shielded technology companies from liability for content that their users post. Whenever people sued over harms linked to social media, companies invoked Section 230, and the cases typically died early.

The K.G.M. litigation uses a different legal strategy: negligence-based product liability. The plaintiffs argue that the harm arises not from third-party content but from the platforms’ own engineering and design decisions, the “informational architecture” and features that shape users’ experience of content. Infinite scrolling, autoplay, notifications calibrated to heighten anxiety and variable-reward systems operate on the same behavioral principles as slot machines.

These are conscious product design choices, and the plaintiffs contend they should be subject to the same safety obligations as any other manufactured product, thereby holding their makers accountable for negligence, strict liability or breach of warranty of fitness.

Judge Carolyn Kuhl of the California Superior Court agreed that these claims warranted a jury trial. In her Nov. 5, 2025, ruling denying Meta’s motion for summary judgment, she distinguished between features related to content publishing, which Section 230 might protect, and features like notification timing, engagement loops and the absence of meaningful parental controls, which it might not.

Here, Kuhl established that the conduct-versus-content distinction – treating algorithmic design choices as the company’s own conduct rather than as the protected publication of third-party speech – was a viable legal theory for a jury to evaluate. This fine-grained approach, evaluating each design feature individually and recognizing the increased complexities of technology products’ design, represents a potential road map for courts nationwide.

What the companies knew

The product liability theory depends partly on what companies knew about the risks of their designs. The 2021 leak of internal Meta documents, widely known as the “Facebook Papers,” revealed that the company’s own researchers had flagged concerns about Instagram’s effects on adolescent body image and mental health.

Internal communications disclosed in the K.G.M. proceedings have included exchanges among Meta employees comparing the platform’s effects to pushing drugs and gambling. Whether this internal awareness constitutes the kind of corporate knowledge that supports liability is a central factual question for the jury to decide.

Tobacco companies were eventually held to account because what they knew – and hid – about the addictiveness of their products came to light. Ray Lustig/The Washington Post via Getty Images

There is a clear analogy to tobacco litigation. In the 1990s, plaintiffs succeeded against tobacco companies by proving they had concealed evidence about the addictive and deadly nature of their products. In K.G.M., the plaintiffs are making the same core argument: Where there is corporate knowledge, deliberate targeting and public denial, liability follows.

K.G.M.’s lead trial attorney, Mark Lanier, is the same lawyer who won multibillion-dollar verdicts in the Johnson & Johnson baby powder litigation, a sign of the scale of accountability the plaintiffs are pursuing.

The science: Contested but consequential

The scientific evidence on social media and youth mental health is real but genuinely complex. The Diagnostic and Statistical Manual of Mental Disorders (DSM-5) does not classify social media use as an addictive disorder. Researchers like Amy Orben have found that large-scale studies show small average associations between social media use and reduced well-being.

Yet Orben herself has cautioned that these averages might mask severe harms experienced by a subset of vulnerable young users, particularly girls ages 12 to 15. The legal question under the negligence theory is not whether social media harms everyone equally, but whether platform designers had an obligation to account for foreseeable interactions between their design features and the vulnerabilities of developing minds, especially when internal evidence suggested they were aware of the risks.

Foreseeability enters the negligence analysis in two ways. First, a manufacturer has a duty to exercise reasonable care in designing its product, and that duty extends to harms that are reasonably foreseeable. Second, the plaintiff must show that the type of injury suffered was a foreseeable consequence of the design choice. The manufacturer doesn’t need to have foreseen the exact injury to the exact plaintiff, but the general category of harm must have been within the range of what a reasonable designer would anticipate.

This is why the Facebook Papers and internal Meta research are so legally significant in K.G.M.’s case: They go directly to establishing that the company’s own researchers identified the specific categories of harm – depression, body dysmorphia, compulsive use patterns among adolescent girls – that the plaintiff alleges she suffered. If the company’s own data flagged these risks and leadership continued on the same design trajectory, that would considerably strengthen the foreseeability element.

Why it matters

Even if the science is unsettled, the legal and policy landscape is shifting fast. In 2025 alone, 20 states in the U.S. enacted new laws governing children’s social media use. And this wave is not only in the U.S.; countries such as the U.K., Australia, Denmark, France and Brazil are also moving forward with specific legislation, including mandates banning social media for those under 16.

The K.G.M. trial represents something more fundamental: the proposition that algorithmic design decisions are product decisions, carrying real obligations of safety and accountability. If this framework takes hold, every platform will need to reconsider not just what content appears, but why and how it is delivered.

Former U.S. diplomat: Don’t expect regime change in Iran

After the largest buildup of U.S. warships and aircraft in the Middle East in decades, American and Israeli military forces launched a massive assault on Iran on Feb. 28, 2026.

President Donald Trump has called the attacks “major combat operations” and has urged regime change in Tehran. Supreme Leader Ayatollah Ali Khamenei was killed in the strikes.

To better understand what this means for the U.S. and Iran, Alfonso Serrano, a U.S. politics editor at The Conversation, interviewed Donald Heflin, a veteran diplomat who now teaches at Tufts University’s Fletcher School.

MEDFORD, Mass.

Regime change is going to be difficult. We heard Trump call for the Iranian people to bring the government down. In the first place, that’s difficult. It’s hard for people with no arms in their hands to bring down a very tightly controlled regime that has a lot of arms.

The second point is that U.S. history in that area of the world is not good with this. You may recall that during the Gulf War of 1990-1991, the U.S. basically encouraged the Iraqi people to rise up, and then made its own decision not to attack Baghdad, to stop short. And that has not been forgotten in Iraq or surrounding countries. I would be surprised if we saw a popular uprising in Iran that really had a chance of bringing the regime down.

A group of men wave Iranian flags as they protest U.S. and Israeli strikes in Tehran, Iran, on Feb. 28, 2026. AP Photo/Vahid Salemi

Do you see the possibility of U.S. troops on the ground to bring about regime change?

I will stick my neck out here and say that’s not going to happen. I mean, there may be some small special forces sent in. That’ll be kept quiet for a while. But as far as large numbers of U.S. troops, no, I don’t think it’s going to happen.

Two reasons. First off, any president would feel that was extremely risky. Iran’s a big country with a big military. The risks you would be taking are large amounts of casualties, and you may not succeed in what you’re trying to do.

But Trump, in particular, despite the military strike against Iran and the one against Venezuela, is not a big fan of big military interventions and war. He’s a guy who will send in fighter planes and small special forces units, but not 10,000 or 20,000 troops.

And the reason for that is, throughout his career, he does well with a little bit of chaos. He doesn’t mind creating a little bit of chaos and figuring out a way to make a profit on the other side of that. War is too much chaos. It’s really hard to predict what the outcome is going to be, what all the ramifications are going to be. Throughout his first term and the first year of his second term, he has shown no inclination to send ground troops anywhere.

Speaking of President Trump, what are the risks he faces?

One risk is going on right now, which is that the Iranians may get lucky or smart and manage to attack a really good target and kill a lot of people, like something in Jerusalem or Tel Aviv or a U.S. military base.

The second risk is that the attacks don’t work, the basic political leadership of Iran survives, and the U.S. winds up with egg on its face.

The third risk is that it works to a certain extent. You take out the top people, but then who steps into their shoes? I mean, go back and look at Venezuela. Most people would have thought that who was going to wind up winning at the end of that was the head of the opposition. But it wound up being the vice president of the old regime, Delcy Rodríguez.

I can see a similar scenario in Iran. The regime has enough depth to survive the death of several of its leaders. The thing to watch will be who winds up in the top jobs, hardliners or realists. But the only institution in Iran strong enough to succeed them is the army, the Revolutionary Guards in particular. Would that be an improvement for the U.S.? It depends on their attitude, and whether it’s the same one the vice president of Venezuela has been taking, which is, “Look, this is a fact of life. We better negotiate with the Americans and figure out some way forward we can both live with.”

But these guys are pretty hardcore revolutionaries. I mean, Iran has been under revolutionary leadership for 47 years. All these guys are true believers. I don’t know if we’ll be able to work with them.

Smoke rises over Tehran on Feb. 28, 2026, after the U.S. and Israel launched airstrikes on Iran. Fatemeh Bahrami/Anadolu via Getty Images

Any last thoughts?

I think that the timing is interesting. If you go back to last year, Trump, after being in office a little and watching the situation between Israel and Gaza, was given an opening, when Israeli Prime Minister Benjamin Netanyahu attacked Qatar.

A lot of conservative Mideast regimes, who didn’t have a huge problem with Israel, essentially said “That’s going too far.” And Trump was able to use that as an excuse. He was able to essentially say, “Okay, you’ve gone too far. You’re really taking risk with world peace. Everybody’s gonna sit at the table.”

I think the same thing’s happening here. I believe many countries would love to see regime change in Iran. But you can’t go into the country and say, “We don’t like the political leadership being elected. We’re going to get rid of them for you.” What often happens in that situation is people begin to rally around the flag. They begin to rally around the government when the bombs start falling.

But in the last few months, we’ve seen a huge human rights crackdown in Iran. We may never know the number of people the Iranian regime killed in the last few months, but 10,000 to 15,000 protesters seems a minimum.

That’s the excuse Trump can use. You can sell it to the Iranian people and say, “Look, they’re killing you in the streets. Forget about your problems with Israel and the U.S. and everything. They’re real, but you’re getting killed in the streets, and that’s why we’re intervening.” It’s a bit of a fig leaf.

Now, as I said earlier, the problem with this is if your next line is, “You know, we’re going to really soften this regime up with bombs; now it’s your time to go out in the streets and bring the regime down.” I may eat these words, but I don’t think that’s going to happen. The regime is just too strong for it to be brought down by bare hands.

This article was updated on Feb. 28, 2026, to include confirmation of Ayatollah Ali Khamenei’s death.

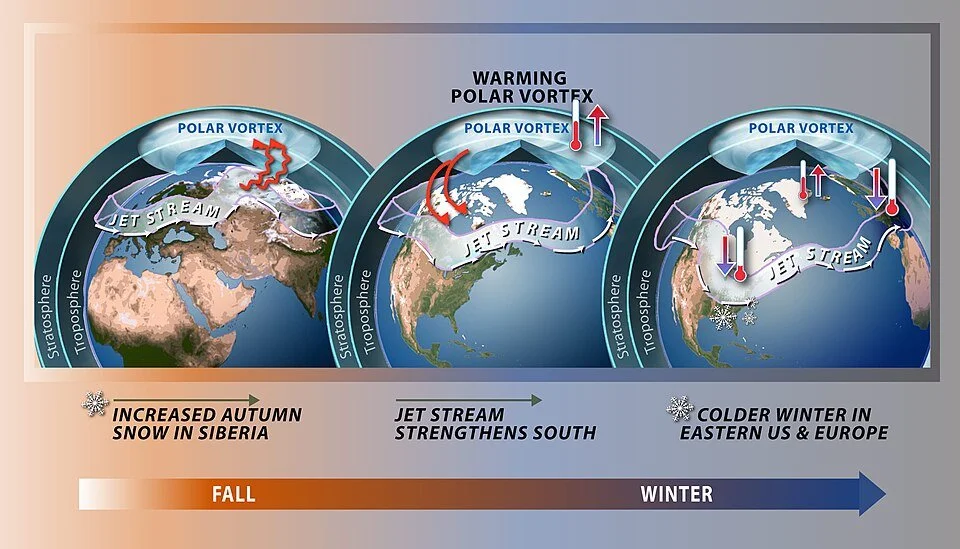

Mathew Barlow/Judah Cohen: How the polar vortex from the warming Arctic and a warm ocean intensified our big winter storm

From The Conversation (except for image above)

Mathew Barlow is a professor of climate science at the University of Massachusetts at Lowell.

Judah Cohen is a climate scientist at the Massachusetts Institute of Technology.

Mathew Barlow has received federal funding for research on extreme events and also conducts legal consulting related to climate change.

Judah Cohen does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

A severe winter storm that brought crippling freezing rain, sleet and snow to a large part of the U.S. in late January 2026 left a mess in states from New Mexico to New England. Hundreds of thousands of people lost power across the South as ice pulled down tree branches and power lines, more than a foot of snow fell in parts of the Midwest and Northeast, and many states faced bitter cold that was expected to linger for days.

The sudden blast may have come as a shock to many Americans after a mostly mild start to winter across much of the nation, but that warmth may have partly contributed to the ferocity of the storm.

As atmospheric and climate scientists, we conduct research that aims to improve understanding of extreme weather, including what makes it more or less likely to occur and how climate change might or might not play a role.

To understand what Americans are experiencing with this winter blast, we need to look more than 20 miles above the surface of Earth, to the stratospheric polar vortex.

A forecast for Jan. 26, 2026, shows the freezing line in white reaching far into Texas. The light band with arrows indicates the jet stream, and the dark band indicates the stratospheric polar vortex. The jet stream is shown at about 3.5 miles above the surface, a typical height for tracking storm systems. The polar vortex is approximately 20 miles above the surface. Mathew Barlow, CC BY

What creates a severe winter storm like this?

Multiple weather factors have to come together to produce such a large and severe storm.

Winter storms typically develop where there are sharp temperature contrasts near the surface and a southward dip in the jet stream, the narrow band of fast-moving air that steers weather systems. If there is a substantial source of moisture, the storms can produce heavy rain or snow.

In late January, a strong Arctic air mass from the north was creating the temperature contrast with warmer air from the south. Multiple disturbances within the jet stream were acting together to create favorable conditions for precipitation, and the storm system was able to pull moisture from the very warm Gulf of Mexico.

The National Weather Service issued winter storm warnings (pink) on Jan. 24, 2026, for a large swath of the U.S. that could see sleet and heavy snow over the following days, along with ice storm warnings (dark purple) in several states and extreme cold warnings (dark blue). National Weather Service

Where does the polar vortex come in?

The fastest winds of the jet stream occur just below the top of the troposphere, which is the lowest level of the atmosphere and ends about seven miles above Earth’s surface. Weather systems are capped at the top of the troposphere, because the atmosphere above it becomes very stable.

The stratosphere is the next layer up, from about seven miles to about 30 miles. While the stratosphere extends high above weather systems, it can still interact with them through atmospheric waves that move up and down in the atmosphere. These waves are similar to the waves in the jet stream that cause it to dip southward, but they move vertically instead of horizontally.

A chart shows how temperatures in the lower layers of the atmosphere change between the troposphere and stratosphere. Miles are on the right, kilometers on the left. NOAA

You’ve probably heard the term “polar vortex” used when an area of cold Arctic air moves far enough southward to influence the United States. That term describes air circulating around the pole, but it can refer to two different circulations, one in the troposphere and one in the stratosphere.

The Northern Hemisphere stratospheric polar vortex is a belt of fast-moving air circulating around the North Pole. It is like a second jet stream, high above the one you may be familiar with from weather graphics, and usually less wavy and closer to the pole.

Sometimes the stratospheric polar vortex can stretch southward over the United States. When that happens, it creates ideal conditions for the up-and-down movement of waves that connect the stratosphere with severe winter weather at the surface.

A stretched stratospheric polar vortex reflects upward waves back down, left, which affects the jet stream and surface weather, right. Mathew Barlow and Judah Cohen, CC BY

The forecast for the January storm showed a close overlap between the southward stretch of the stratospheric polar vortex and the jet stream over the U.S., indicating perfect conditions for cold and snow.

The biggest swings in the jet stream are associated with the most energy. Under the right conditions, that energy can bounce off the polar vortex back down into the troposphere, exaggerating the north-south swings of the jet stream across North America and making severe winter weather more likely.

This is what was happening in late January 2026 in the central and eastern U.S.

If climate is warming, why are we still getting severe winter storms?

Earth is unequivocally warming as human activities release greenhouse gas emissions that trap heat in the atmosphere, and snow amounts are decreasing overall. But that does not mean severe winter weather will never happen again.

Some research suggests that even in a warming environment, cold events, while occurring less frequently, may still remain relatively severe in some locations.

One factor may be increasing disruptions to the stratospheric polar vortex, which appear to be linked to the rapid warming of the Arctic with climate change.

The polar vortex is a strong band of winds in the stratosphere, normally ringing the North Pole. When it weakens, it can split. The polar jet stream can mirror this upheaval, becoming weaker or wavy. At the surface, cold air is pushed southward in some locations. NOAA

Additionally, a warmer ocean leads to more evaporation, and a warmer atmosphere can hold more moisture, so more moisture is available for storms. The process of moisture condensing into rain or snow also releases energy that fuels storms. However, warming can also reduce the strength of storms by reducing temperature contrasts.

The opposing effects make it complicated to assess the potential change to average storm strength. However, intense events do not necessarily change in the same way as average events. On balance, it appears that the most intense winter storms may be becoming more intense.

A warmer environment also makes it more likely that precipitation that would have fallen as snow in previous winters will instead fall as sleet or freezing rain.

Still many questions

Scientists are constantly improving the ability to predict and respond to these severe weather events, but there are many questions still to answer.

Much of the data and research in the field relies on a foundation of work by federal employees, including government labs like the National Center for Atmospheric Research, known as NCAR, which has been targeted by the Trump administration for funding cuts. These scientists help develop the crucial models, measuring instruments and data that scientists and forecasters everywhere depend on.

How melting ice sheets affect different coasts

Debris washed ashore for many miles to the south of the two collapsed houses in Rodanthe, N.C., on the Outer Banks. Sea levels are rising fast on the U.S. East Coast.

— Photo by National Park Service

The famous story of King Canute and the tides.

Article is from The Conversation (but not the picture above)

Shaina Sadai, an associate in earth science in the Five College Consortium, is an assistant professor of geosciences at the University of Rhode Island, and Ambarish Karmalkar is an assistant professor of geosciences at the University of Rhode Island.

Shaina Sadai has received funding from the National Science Foundation and the Hitz Family Foundation.

Ambarish Karmalkar receives funding from the National Science Foundation.

KINGSTON, R.I.

When polar ice sheets melt, the effects ripple across the world. The melting ice raises average global sea level, alters ocean currents and affects temperatures in places far from the poles.

But melting ice sheets don’t affect sea level and temperatures in the same way everywhere.

In a new study, our team of scientists investigated how ice melting in Antarctica affects global climate and sea level. We combined computer models of the Antarctic ice sheet, solid Earth and global climate, including atmospheric and oceanic processes, to explore the complex interactions that melting ice has with other parts of the Earth.

Understanding what happens to Antarctica’s ice matters, because it holds enough frozen water to raise average sea level by about 190 feet (58 meters). As the ice melts, the rising seas become an existential problem for people and ecosystems in island and coastal communities.

Changes in Antarctica

The extent to which the Antarctic ice sheet melts will depend on how much the Earth warms. And that depends on future greenhouse gas emissions from sources including vehicles, power plants and industries.

Studies suggest that much of the Antarctic ice sheet could survive if countries reduce their greenhouse gas emissions in line with the 2015 Paris Agreement goal to keep global warming to 1.5 degrees Celsius (2.7 Fahrenheit) compared to before the industrial era. However, if emissions continue rising and the atmosphere and oceans warm much more, that could cause substantial melting and much higher sea levels.

Our research shows that high emissions pose risks not just to the stability of the West Antarctic ice sheet, which is already contributing to sea-level rise, but also to the much larger and more stable East Antarctic ice sheet.

It also shows how different regions of the world will experience different levels of sea-level rise as Antarctica melts.

Understanding sea-level change

If sea levels rose like the water in a bathtub, then as ice sheets melt, the ocean would rise by the same amount everywhere. But that isn’t what happens.

Instead, many places experience higher regional sea-level rise than the global average, while places close to the ice sheet can even see sea levels drop. The main reason has to do with gravity.

Gravity is determined by mass, and Earth’s mass is not distributed equally.

Ice sheets are massive, and that mass creates a strong gravitational pull that attracts the surrounding ocean water toward them, similar to how the gravitational pull between Earth and the Moon affects the tides.

As the ice sheet shrinks, its gravitational pull on the ocean declines, leading to sea levels falling in regions close to the ice sheet coast and rising farther away. But sea-level changes are not only a function of distance from the melting ice sheet. This ice loss also changes how the planet rotates. The rotation axis is pulled toward that missing ice mass, which in turn redistributes water around the globe.

2 factors that can slow melting

As the massive ice sheet melts, the solid Earth beneath it rebounds.

Underneath the bedrock of Antarctica is Earth’s mantle, which flows slowly like maple syrup. The more the ice sheet melts, the less it presses down on the solid Earth. With less weight on it, the bedrock can rebound. This can lift parts of the ice sheet out of contact with warming ocean waters, slowing the rate of melting. This happens more quickly in places where the mantle flows faster, such as underneath the West Antarctic ice sheet.

This rebound effect could help preserve the ice sheet – if global greenhouse-gas emissions are kept low.

Another factor that can slow melting might seem counterintuitive.

While Antarctic meltwater drives rising sea levels, models show it also delays greenhouse gas-induced warming. That’s because icy meltwater from Antarctica reduces ocean surface temperatures in the Southern Hemisphere and tropical Pacific, trapping heat in the deep ocean and slowing the rise of global average air temperature.

But as melting occurs, even if it slows, sea levels rise.

Mapping our sea-level results

We combined computer models that simulate these and other behaviors of the Antarctic ice sheet, solid Earth and climate to understand what could happen to sea level around the world as global temperatures rise and ice melts.

For example, in a moderate scenario in which the world reduces greenhouse gas emissions, though not enough to keep global warming under 2 degrees Celsius (3.6 Fahrenheit) by 2100, we found the average sea-level rise from Antarctic ice melt would be about 4 inches (0.1 meters) by 2100. By 2200, it would be more than 3.3 feet (1 meter).

Keep in mind that this is only sea-level rise caused by Antarctic melt. The Greenland ice sheet and thermal expansion of seawater as the oceans warm will also raise sea levels. Current estimates suggest that total average sea-level rise – including Greenland and thermal expansion – would be 1 to 2 feet (0.32 to 0.63 meters) by 2100 under the same scenario.

Models show Antarctica’s contribution to sea-level rise in 2200 under medium (top) and high (bottom) emissions. The global mean sea-level rise is in purple. Regionally higher than average sea-level rise appears in dark blue. Sadai et al., 2025

We also show how sea-level rise from Antarctica varies around the world.

In that moderate emissions scenario, we found the highest sea-level rise from Antarctic ice melt alone, up to 5 feet (1.5 meters) by 2200, occurs in the Indian, Pacific and western Atlantic ocean basins – places far from Antarctica.

These regions are home to many people in low-lying coastal areas, including residents of island nations in the Caribbean, such as Jamaica, and the central Pacific, such as the Marshall Islands, that are already experiencing detrimental impacts from rising seas.

Under a high emissions scenario, we found the average sea-level rise caused by Antarctic melting would be much higher: about 1 foot (0.3 meters) in 2100 and close to 10 feet (more than 3 meters) in 2200.

Under this scenario, a broader swath of the Pacific Ocean basin north of the equator, including Micronesia and Palau, and across the middle of the Atlantic Ocean basin would see the highest sea-level rise, up to 14 feet (4.3 meters) by 2200, just from Antarctica.

Although these sea-level rise numbers seem alarming, the world’s current emissions and recent projections suggest this very high emissions scenario is unlikely. This exercise, however, highlights the serious consequences of high emissions and underscores the importance of reducing emissions.

The takeaway

These impacts have implications for climate justice, particularly for island nations that have done little to contribute to climate change yet already experience the devastating impacts of sea-level rise.

Many island nations are already losing land to sea-level rise, and they have been leading global efforts to minimize temperature rise. Protecting these countries and other coastal areas will require reducing greenhouse gas emissions faster than nations are committing to do today.

Stephanie Otts: How N.E. fishing industry has changed since ‘The Perfect Storm’ film

From The Conversation (except for image above)

Stephanie Otts is director of the National Sea Grant Law Center, at the University of Mississippi.

She receives funding from the NOAA National Sea Grant College Program through the U.S. Department of Commerce. Previous support for her fisheries management legal research was provided by The Nature Conservancy.

Twenty-five years ago, The Perfect Storm roared into movie theaters. The disaster flick, starring George Clooney and Mark Wahlberg, was a riveting, fictionalized account of commercial swordfishing in New England and a crew who went down in a storm.

The anniversary of the film’s release, on June 30, 2000, provides an opportunity to reflect on the real-life changes to New England’s commercial fishing industry.

Fishing used to be more open to all

In the true story behind the movie, six men lost their lives in late October 1991 when the commercial swordfishing vessel Andrea Gail disappeared in a fierce storm in the North Atlantic as it was headed home to Gloucester, Massachusetts.

At the time, and until very recently, almost all commercial fisheries were open access, meaning there were no restrictions on who could fish.

There were permit requirements and regulations about where, when and how you could fish, but anyone with the means to purchase a boat and associated permits, gear, bait and fuel could enter the fishery. Eight regional councils established under a 1976 federal law to manage fisheries around the U.S. determined how many fish could be harvested prior to the start of each fishing season.

Fishing has been an integral part of coastal New England culture since its towns were established. In this 1899 photo, a New England community weighs and packs mackerel. Charles Stevenson/Freshwater and Marine Image Bank

Fishing started when the season opened and continued until the catch limit was reached. In some fisheries, this resulted in a “race to the fish” or a “derby,” where vessels competed aggressively to harvest the available catch in a short time. The limit could be reached in a single day, as happened in the Pacific halibut fishery in the late 1980s.

By the 1990s, however, open access systems were coming under increased criticism from economists as concerns about overfishing rose.

The fish catch peaked in New England in 1987 and would remain far above what the fish population could sustain for two more decades. Years of overfishing led to the collapse of fish stocks, including North Atlantic cod in 1992 and Pacific sardine in 2015.

As populations declined, managers responded by cutting catch limits to allow more fish to survive and reproduce. Fishing seasons were shortened, as it took less time for the fleets to harvest the allowed catch. It became increasingly hard for fishermen to catch enough fish to earn a living.

Saving fisheries changed the industry

In the early 2000s, as these economic and environmental challenges grew, fisheries managers started limiting access. Instead of allowing anyone to fish, only vessels or individuals meeting certain eligibility requirements would have the right to fish.

The most common method of limiting access in the U.S. is through limited entry permits, initially awarded to individuals or vessels based on previous participation or success in the fishery. Another approach is to assign individual harvest quotas or “catch shares” to permit holders, limiting how much each boat can bring in.

In 2007, Congress amended the 1976 Magnuson-Stevens Fishery Conservation and Management Act to promote the use of limited access programs in U.S. fisheries.

Ships in the fleet out of New Bedford, Mass. Henry Zbyszynski/Flickr, CC BY

Today, limited access is common, and there are positive signs that the management change is helping achieve the law’s environmental goal of preventing overfishing. Since 2000, the populations of 50 major fishing stocks have been rebuilt, meaning they have recovered to a level that can once again support fishing.

I’ve been following the changes as a lawyer focused on ocean and coastal issues, and I see much work still to be done.

Forty fish stocks are currently being managed under rebuilding plans that limit catch to allow the stock to grow, including Atlantic cod, which has struggled to recover due to a complex combination of factors, including climatic changes.

The lingering effect on communities today

While many fish stocks have recovered, the effort came at an economic cost to many individual fishermen. The limited-access Northeast groundfish fishery, which includes Atlantic cod, haddock and flounder, shed nearly 800 crew positions between 2007 and 2015.

The loss of jobs and revenue from fishing impacts individual family income and relationships, strains other businesses in fishing communities, and affects those communities’ overall identity and resilience, as illustrated by a recent economic snapshot of the Alaska seafood industry.

When original limited-access permit holders leave the business – for economic, personal or other reasons – their permits are either terminated or sold to other eligible permit holders, leading to fewer active vessels in the fleet. As a result, the number of vessels fishing for groundfish has declined from 719 in 2007 to 194 in 2023, meaning fewer jobs.

A fisherman unloads part of his day’s catch of 300 pounds of groundfish, including flounder, in January 2006 in Gloucester, Mass. AP Photo/Lisa Poole

Because of their scarcity, limited-access permits can cost upward of US$500,000, which is often beyond the financial means of a small business or a young person seeking to enter the industry. The high prices may also lead retiring fishermen to sell their permits, as opposed to passing them along with the vessels to the next generation.

These economic forces have significantly altered the fishing industry, leading to more corporate and investor ownership, rather than the family-owned operations that were more common in the Andrea Gail’s time.

Similar to the experience of small family farms, fishing captains and crews are being pushed into corporate arrangements that reduce their autonomy and revenues.

Consolidation can threaten the future of entire fleets, as New Bedford, Massachusetts, saw when Blue Harvest Fisheries, backed by a private equity firm, bought up vessels and other assets and then declared bankruptcy a few years later, leaving a smaller fleet and some local businesses and fishermen unpaid for their work. A company with local connections bought eight vessels from Blue Harvest along with 48 state and federal permits the company held.

New challenges and unchanging risks

While there are signs of recovery for New England’s fisheries, challenges continue.

Warming water temperatures have shifted the distribution of some species, affecting where and when fish are harvested. For example, lobsters have moved north toward Canada. When vessels need to travel farther to find fish, that increases fuel and supply costs and time away from home.

Fisheries managers will need to continue to adapt to keep New England’s fisheries healthy and productive.

One thing that, unfortunately, hasn’t changed is the dangerous nature of the occupation. Between 2000 and 2019, 414 fishermen died in 245 disasters.

When the Feds built nice housing for working-class people

Plans and elevations of typical U.S. Housing Corporation housing.

From The Conversation, except for image above

Eran Ben-Joseph is a professor of landscape architecture and urban planning at the Massachusetts Institute of Technology (MIT), in Cambridge, Mass.

He does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond his academic appointment.

In 1918, as World War I intensified overseas, the U.S. government embarked on a radical experiment: It quietly became the nation’s largest housing developer, designing and constructing more than 80 new communities across 26 states in just two years.

These weren’t hastily erected barracks or rows of identical homes. They were thoughtfully designed neighborhoods, complete with parks, schools, shops and sewer systems.

In just two years, this federal initiative provided housing for almost 100,000 people.

Few Americans are aware that such an ambitious and comprehensive public housing effort ever took place. Many of the homes are still standing today.

But as an urban planning scholar, I believe that this brief historic moment – spearheaded by a shuttered agency called the United States Housing Corporation – offers a revealing lesson on what government-led planning can achieve during a time of national need.

Government mobilization

When the U.S. declared war against Germany in April 1917, federal authorities immediately realized that ship, vehicle and arms manufacturing would be at the heart of the war effort. To meet demand, there needed to be sufficient worker housing near shipyards, munitions plants and steel factories.

So on May 16, 1918, Congress authorized President Woodrow Wilson to provide housing and infrastructure for industrial workers vital to national defense. By July, it had appropriated $100 million – about $2.3 billion today – for the effort, with Secretary of Labor William B. Wilson tasked with overseeing it via the U.S. Housing Corporation.

Over the course of two years, the agency designed and planned over 80 housing projects. Some developments were small, consisting of a few dozen dwellings. Others approached the size of entire new towns.

For example, Cradock, near Norfolk, Va., was planned on a 310-acre site, with more than 800 detached homes developed on just 100 of those acres. In Dayton, Ohio, the agency created a 107-acre community that included 175 detached homes and a mix of over 600 semidetached homes and row houses, along with schools, shops, a community center and a park.

Designing ideal communities

Notably, the Housing Corporation was not simply committed to offering shelter.

Its architects, planners and engineers aimed to create communities that were not only functional but also livable and beautiful. They drew heavily from Britain’s late-19th century Garden City movement, a planning philosophy that emphasized low-density housing, the integration of open spaces and a balance between built and natural environments.

Importantly, instead of simply creating complexes of apartment units, akin to the public housing projects that most Americans associate with government-funded housing, the agency focused on the construction of single-family and small multifamily residential buildings that workers and their families could eventually own.

This approach reflected a belief by the policymakers that property ownership could strengthen community responsibility and social stability. During the war, the federal government rented these homes to workers at regulated rates designed to be fair, while covering maintenance costs. After the war, the government began selling the homes – often to the tenants living in them – through affordable installment plans that provided a practical path to ownership.

A single-family home in Davenport, Iowa, built by the U.S. Housing Corporation. National Archives

Though the scope of the Housing Corporation’s work was national, each planned community took into account regional growth and local architectural styles. Engineers often built streets that adapted to the natural landscape. They spaced houses apart to maximize light, air and privacy, with landscaped yards. No resident lived far from greenery.

In Quincy, Mass., for example, the agency built a 22-acre neighborhood with 236 homes designed mostly in a Colonial Revival style to serve the nearby Fore River Shipyard. The development was laid out to maximize views, green space and access to the waterfront, while maintaining density through compact street and lot design.

At Mare Island, Calif., developers located the housing site on a steep hillside near a naval base. Rather than flatten the land, designers worked with the slope, creating winding roads and terraced lots that preserved views and minimized erosion. The result was a 52-acre community with over 200 homes, many of which were designed in the Craftsman style. There was also a school, stores, parks and community centers.

Infrastructure and innovation

Alongside housing construction, the Housing Corporation invested in critical infrastructure. Engineers installed over 649,000 feet of modern sewer and water systems, ensuring that these new communities set a high standard for sanitation and public health.

Attention to detail extended inside the homes. Architects experimented with efficient interior layouts and space-saving furnishings, including foldaway beds and built-in kitchenettes. Some of these innovations came from private companies that saw the program as a platform to demonstrate new housing technologies.

One company, for example, designed fully furnished studio apartments with furniture that could be rotated or hidden, transforming a space from living room to bedroom to dining room throughout the day.

To manage the large scale of this effort, the agency developed and published a set of planning and design standards – the first of their kind in the United States. These manuals covered everything from block configurations and road widths to lighting fixtures and tree-planting guidelines.

The standards emphasized functionality, aesthetics and long-term livability.

Architects and planners who worked for the Housing Corporation carried these ideas into private practice, academia and housing initiatives. Many of the planning norms still used today, such as street hierarchies, lot setbacks and mixed-use zoning, were first tested in these wartime communities.

And many of the planners involved in experimental New Deal community projects, such as Greenbelt, Md., had worked for or alongside Housing Corporation designers and planners. Their influence is apparent in the layout and design of these communities.

A brief but lasting legacy

With the end of World War I, the political support for federal housing initiatives quickly waned. The Housing Corporation was dissolved by Congress, and many planned projects were never completed. Others were incorporated into existing towns and cities.

Yet, many of the neighborhoods built during this period still exist today, integrated into the fabric of the country’s cities and suburbs. Residents in places such as Aberdeen, Md.; Bremerton, Wash.; Bethlehem, Penn.; Watertown, N.Y.; and New Orleans may not even realize that many of the homes in their communities originated from a bold federal housing experiment.

These homes on Lawn Avenue in Quincy, Mass., in 2019 were built by the U.S. Housing Corporation. Google Street View

The Housing Corporation’s efforts, though brief, showed that large-scale public housing could be thoughtfully designed, community oriented and quickly executed. For a short time, in response to extraordinary circumstances, the U.S. government succeeded in building more than just houses. It constructed entire communities, demonstrating that government has a major role and can lead in finding appropriate, innovative solutions to complex challenges.

At a moment when the U.S. once again faces a housing crisis, the legacy of the U.S. Housing Corporation serves as a reminder that bold public action can meet urgent needs.

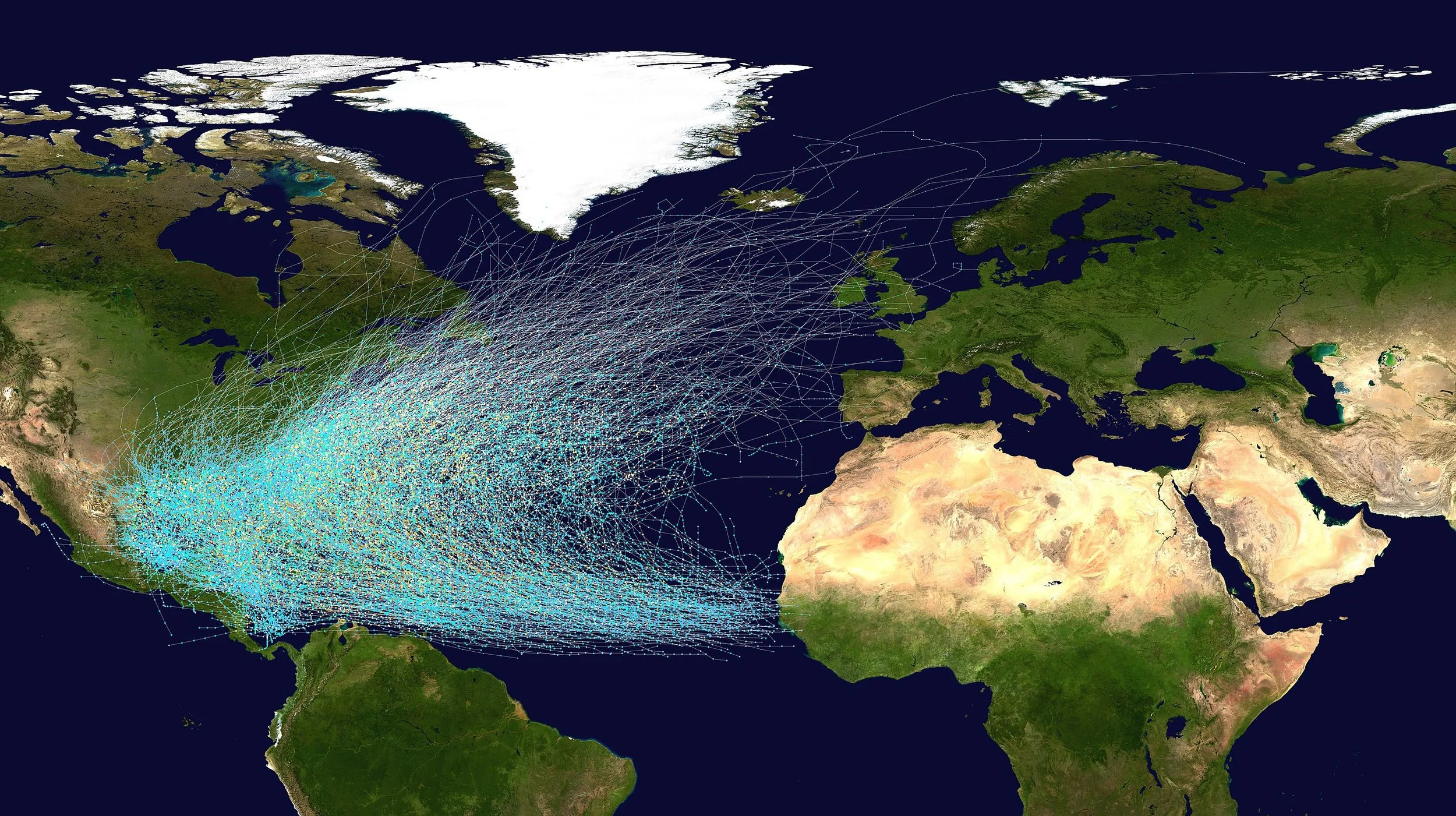

James L. Fitzsimmons: Of hurricanes and Huracan

Tracks of North Atlantic tropical cyclones 1851-2019

MIDDLEBURY, Vt.

The ancient Maya believed that everything in the universe, from the natural world to everyday experiences, was part of a single, powerful spiritual force. They were not polytheists who worshipped distinct gods but pantheists who believed that various gods were just manifestations of that force.

Some of the best evidence for this comes from the behavior of two of the most powerful beings of the Maya world: The first is a creator god whose name is still spoken by millions of people every fall – Huracán, or “Hurricane.” The second is a god of lightning, K'awiil, from the early first millennium C.E.

As a scholar of the Indigenous religions of the Americas, I recognize that these beings, though separated by over 1,000 years, are related and can teach us something about our relationship to the natural world.

Huracán, the ‘Heart of Sky’

Huracán was once a god of the K’iche’, one of the Maya peoples who today live in the southern highlands of Guatemala. He was one of the main characters of the Popol Vuh, a religious text from the 16th century. His name probably originated in the Caribbean, where other cultures used it to describe the destructive power of storms.

The K’iche’ associated Huracán, which means “one leg” in the K’iche’ language, with weather. He was also their primary god of creation and was responsible for all life on earth, including humans.

Because of this, he was sometimes known as U K'ux K'aj, or “Heart of Sky.” In the K’iche’ language, k'ux was not only the heart but also the spark of life, the source of all thought and imagination.

Yet, Huracán was not perfect. He made mistakes and occasionally destroyed his creations. He was also a jealous god who damaged humans so they would not be his equal. In one such episode, he is believed to have clouded their vision, thus preventing them from being able to see the universe as he saw it.

Huracán was one being who existed as three distinct persons: Thunderbolt Huracán, Youngest Thunderbolt and Sudden Thunderbolt. Each of them embodied different types of lightning, ranging from enormous bolts to small or sudden flashes of light.

Despite the fact that he was a god of lightning, there were no strict boundaries between his powers and the powers of other gods. Any of them might wield lightning, or create humanity, or destroy the Earth.

Another storm god

The Popol Vuh implies that gods could mix and match their powers at will, but other religious texts are more explicit. One thousand years before the Popol Vuh was written, there was a different version of Huracán called K'awiil. During the first millennium, people from southern Mexico to western Honduras venerated him as a god of agriculture, lightning and royalty.

The ancient Maya god K'awiil, left, had an ax or torch in his forehead as well as a snake in place of his right leg. K5164 from the Justin Kerr Maya archive, Dumbarton Oaks, Trustees for Harvard University, Washington, D.C., CC BY-SA

Illustrations of K'awiil can be found everywhere on Maya pottery and sculpture. He is almost human in many depictions: He has two arms, two legs and a head. But his forehead is the spark of life – and so it usually has something that produces sparks sticking out of it, such as a flint ax or a flaming torch. And one of his legs does not end in a foot. In its place is a snake with an open mouth, from which another being often emerges.

Indeed, rulers, and even gods, once performed ceremonies to K'awiil to try to summon other supernatural beings. As personified lightning, he was believed to create portals to other worlds, through which ancestors and gods might travel.

Representation of power

For the ancient Maya, lightning was raw power. It was basic to all creation and destruction. Because of this, the ancient Maya carved and painted many images of K'awiil. Scribes wrote about him as a kind of energy – as a god with “many faces,” or even as part of a triad similar to Huracán.

He was everywhere in ancient Maya art. But he was also never the focus. As raw power, he was used by others to achieve their ends.

Rain gods, for example, wielded him like an ax, creating sparks in seeds for agriculture. Conjurers summoned him, but mostly because they believed he could help them communicate with other creatures from other worlds. Rulers even carried scepters fashioned in his image during dances and processions.

Moreover, Maya artists always had K'awiil doing something or being used to make something happen. They believed that power was something you did, not something you had. Like a bolt of lightning, power was always shifting, always in motion.

An interdependent world

Because of this, the ancient Maya thought that reality was not static but ever-changing. There were no strict boundaries between space and time, the forces of nature or the animate and inanimate worlds.

Residents wade through a street flooded by Hurricane Helene, in Batabano, Mayabeque province, Cuba, on Sept. 26, 2024. AP Photo/Ramon Espinosa

Everything was malleable and interdependent. Theoretically, anything could become anything else – and everything was potentially a living being. Rulers could ritually turn themselves into gods. Sculptures could be hacked to death. Even natural features such as mountains were believed to be alive.

These ideas – common in pantheist societies – persist today in some communities in the Americas.

They were once mainstream, however, and were a part of K'iche’ religion 1,000 years later, in the time of Huracán. One of the lessons of the Popol Vuh, told during the episode where Huracán clouds human vision, is that the human perception of reality is an illusion.

The illusion is not that different things exist. Rather it is that they exist independent from one another. Huracán, in this sense, damaged himself by damaging his creations.

Every hurricane season should remind us that human beings are not independent from nature but part of it. And like Huracán, when we damage nature we damage ourselves.

James L. Fitzsimmons is a professor of anthropology at Middlebury College.

He does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.

Jamie Hartmann-Boyce: Benefits of menthol-flavored e-cigarettes may outweigh the risks

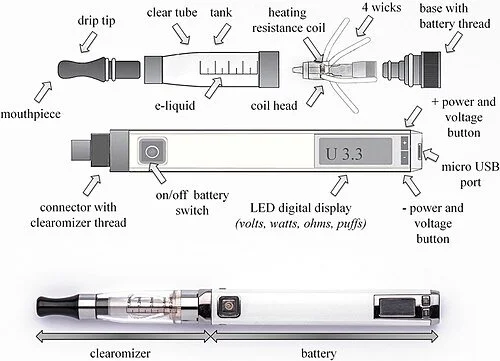

An e-cigarette with transparent clearomizer and changeable dual-coil head. This model allows for a wide range of settings.

On June 21, 2024, the U.S. Food and Drug Administration authorized the marketing of the first electronic cigarette products in flavors other than tobacco in the U.S. Of the four new authorized products, two are sealed, prefilled pods with menthol-flavored nicotine liquid that can be used in certain types of e-cigarettes. The other two are disposable nicotine e-cigarettes – meaning once the prefilled menthol liquid is used, the device cannot be used again.

The Conversation asked Jamie Hartmann-Boyce, a health-policy expert who specializes in tobacco control and e-cigarette products, to explain the pros and cons of the FDA’s authorization and what it could mean for vulnerable populations.

AMHERST, MASS.

E-cigarettes, also known as vapes, are hand-held, battery-operated devices that heat a liquid to form a vapor that can be inhaled. This vapor can be manufactured to include flavors. Unlike traditional cigarettes, e-cigarettes do not contain tobacco leaf. E-cigarettes can – but don’t always – contain nicotine.

Until June 21, the only nicotine e-cigarettes authorized for sale in the U.S. were tobacco-flavored. Some organizations, including some tobacco industry advocates, described this as a “de facto flavor ban.”

The Centers for Disease Control and Prevention defines menthol as a chemical compound found naturally in peppermint and other similar plants.

This is the first time the FDA has authorized marketing of an e-cigarette flavor other than tobacco. “Tobacco flavor” describes a range of flavors that are designed to taste similar to traditional cigarettes.

What are the potential harms, such as risks to kids?

Tobacco companies have historically added menthol to traditional cigarettes to make them seem less harsh and more appealing. Tobacco companies have aggressively marketed menthol cigarettes to Black people. In 2022, the FDA proposed a ban on menthol cigarettes based on their appeal, including to youth, and the potential of such a ban to improve health and prevent deaths. But the proposal has stalled.

Research shows that nontobacco, e-liquid flavors are more appealing than tobacco flavors, including to young people. The FDA has previously denied applications for menthol e-cigarettes, stating that the applications “did not present sufficient scientific evidence to show that the potential benefit to adult smokers outweighs the risks of youth initiation and use.”

How are e-cigarettes regulated in the U.S.?

In the U.S., e-cigarettes with nicotine fall under the authority of the FDA’s Center for Tobacco Products. For their products to be legally marketed and sold in the U.S., e-cigarette manufacturers must apply for marketing authorization from the FDA.

The FDA evaluates these applications based on the scientific evidence provided by the manufacturers. To be approved, the applications must demonstrate that permitting marketing of the products would be appropriate for protection of public health.

This means the FDA needs to weigh whether the potential benefits of the product – in other words, its ability to help adults quit smoking – outweigh its risks, including its appeal to youth. Though not risk-free, e-cigarettes are considered much less harmful than smoking. This means that adults who switch from smoking to vaping may benefit from improvements in their health.

The FDA’s authorization of menthol-flavored e-cigarettes underscores the growing body of evidence that vaping can reduce the harms of traditional smoking. But many experts are concerned that the new products will entice more young people and nonsmokers to begin vaping and smoking.

Weren’t flavored vapes already available in the U.S.?

Even though only tobacco e-liquids were authorized for sale before this new announcement, many Americans report using flavored e-liquids, with sweet, fruit and mint and menthol flavors being the most popular. This is in part because many vaping products available in the U.S. haven’t been authorized for marketing or sale. These are referred to as illicit products. In addition, some of the products currently available are still being reviewed by the FDA.

Many of the harms the public associates with vaping – such as the serious vaping-related lung injuries that were widely reported in 2019 and 2020 – have been linked to illicit products and the harmful chemicals some contain, which are not present in FDA-authorized products. Earlier in June, the Justice Department and FDA announced a federal multi-agency taskforce to curb distribution and sale of illegal e-cigarettes. Meanwhile, the U.S. is awash in sleek, colorful and highly potent vapes manufactured in China.

What are the potential health effects?

The best available research doesn’t show any clear differences between menthol- and tobacco-flavored e-liquids in terms of direct health risks to users.

As mentioned above, research suggests that nontobacco e-liquid flavors are more appealing than tobacco-flavored ones, at least in some groups. This might mean an increase in the risk of nonsmoking youth taking up vaping. But it might also encourage people who smoke to switch to vaping, which can pose fewer risks than smoking. Quitting smoking can also improve the health of other people, by reducing secondhand smoke exposure.

Smoking kills half of its regular users and is the leading cause of preventable death in the U.S. and worldwide. So alternatives that increase chances of successfully quitting smoking can bring substantial health benefits.

To authorize the four new products, the FDA had to review extensive documentation and research showing that the benefits of the new products outweighed their risks.

Jamie Hartmann-Boyce is an assistant professor of health promotion and policy at the University of Massachusetts at Amherst.

She receives funding from the National Institutes of Health, the U.S. Food and Drug Administration, and Cancer Research UK, on topics related to tobacco control. She sits on Health Canada's Scientific Advisory Board on Vaping Products and consults for the Truth Initiative.

Adrienne Mayor: Wild animals know how to self-medicate

New Englanders should know that the region’s Native Americans long used local plants as medicines before the European colonists arrived. Above, a willow tree, whose bark contains salicylic acid, the active metabolite of aspirin, and has been used for millennia to relieve pain and reduce fever. Below, sassafras, whose leaves many Native American tribes used as medicine to treat wounds, acne, urinary disorders and high fevers.


When a wild orangutan in Sumatra recently suffered a facial wound, apparently after fighting with another male, he did something that caught the attention of the scientists observing him.

The animal chewed the leaves of a liana vine, a plant not normally eaten by apes. Over several days, the orangutan carefully applied the juice to his wound, then covered it with a paste of chewed-up liana. The wound healed with only a faint scar. The tropical plant he selected has antibacterial and antioxidant properties and is known to alleviate pain, fever, bleeding and inflammation.

The striking story was picked up by media worldwide. In interviews and in their research paper, the scientists stated that this is “the first systematically documented case of active wound treatment by a wild animal” with a biologically active plant. The discovery will “provide new insights into the origins of human wound care.”

Fibraurea tinctoria leaves and the orangutan chomping on some of the leaves. Laumer et al, Sci Rep 14, 8932 (2024), CC BY

To me, the behavior of the orangutan sounded familiar. As a historian of ancient science who investigates what Greeks and Romans knew about plants and animals, I was reminded of similar cases reported by Aristotle, Pliny the Elder, Aelian and other naturalists from antiquity. A remarkable body of accounts from ancient to medieval times describes self-medication by many different animals. The animals used plants to treat illness, repel parasites, neutralize poisons and heal wounds.

The term zoopharmacognosy – “animal medicine knowledge” – was invented in 1987. But as the Roman natural historian Pliny pointed out 2,000 years ago, many animals have made medical discoveries useful for humans. Indeed, a large number of medicinal plants used in modern drugs were first discovered by Indigenous peoples and past cultures who observed animals employing plants and emulated them.

What you can learn by watching animals

Some of the earliest written examples of animal self-medication appear in Aristotle’s “History of Animals” from the fourth century BCE, such as the well-known habit of dogs to eat grass when ill, probably for purging and deworming.

Aristotle also noted that after hibernation, bears seek wild garlic as their first food. It is rich in vitamin C, iron and magnesium, healthful nutrients after a long winter’s nap. The Latin name reflects this folk belief: Allium ursinum translates to “bear lily,” and the common name in many other languages refers to bears.

As a hunter lands several arrows in his quarry, a wounded doe nibbles some growing dittany. British Library, Harley MS 4751 (Harley Bestiary), folio 14v, CC BY

Pliny explained how the use of dittany, also known as wild oregano, to treat arrow wounds arose from watching wounded stags grazing on the herb. Aristotle and Dioscorides credited wild goats with the discovery. Vergil, Cicero, Plutarch, Solinus, Celsus and Galen claimed that dittany has the ability to expel an arrowhead and close the wound. Among dittany’s many known phytochemical properties are antiseptic, anti-inflammatory and coagulating effects.

According to Pliny, deer also knew an antidote for toxic plants: wild artichokes. The leaves relieve nausea and stomach cramps and protect the liver. To cure themselves of spider bites, Pliny wrote, deer ate crabs washed up on the beach, and sick goats did the same. Notably, crab shells contain chitosan, which boosts the immune system.

When elephants accidentally swallowed chameleons hidden on green foliage, they ate olive leaves, a natural antibiotic to combat salmonella harbored by lizards. Pliny said ravens eat chameleons, but then ingest bay leaves to counter the lizards’ toxicity. Antibacterial bay leaves relieve diarrhea and gastrointestinal distress. Pliny noted that blackbirds, partridges, jays and pigeons also eat bay leaves for digestive problems.

A weasel wears a belt of rue as it attacks a basilisk in an illustration from a 1600s bestiary. Wenceslaus Hollar/Wikimedia Commons, CC BY